Welcome back to the Series!

Continuing our ML/DL interview prep, we now move from Linear Regression to its classification counterpart—Logistic Regression.

This post will break down:

✅ Conceptual understanding of logistic regression.

✅ Applied techniques for real-world challenges.

✅ System design considerations.

✅ Common interview questions (with solutions!).

Let’s dive in!

1️⃣ Conceptual Understanding

What is Logistic Regression?

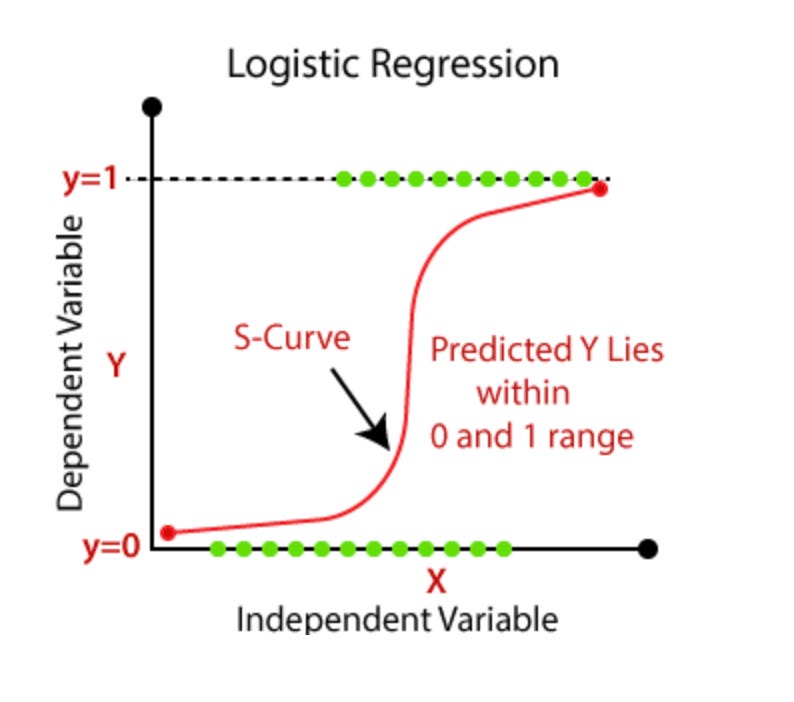

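Despite its name, Logistic Regression is a classification algorithm. It predicts categorical outcomes by modeling the probability of an event using the logistic (sigmoid) function applied to a linear score $z$:

$$\sigma(z) = \frac{1}{1 + e^{-z}}, \qquad z = \beta_0 + \beta_1 x_1 + \dots + \beta_p x_p$$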

Output is bounded between 0 and 1, making it suitable for binary classification.

The decision boundary is linear, but probabilities allow for interpretable predictions.
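To make this concrete, here is a minimal sketch of how a linear score becomes a probability and then a class label. The coefficient values and the helper name `predict_proba` are made up for illustration, and the code is not hardened against overflow for very negative scores.

```python
import math
from typing import Sequence

def sigmoid(z: float) -> float:
    """Map a real-valued score to the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(x: Sequence[float], beta0: float, beta: Sequence[float]) -> float:
    """P(y = 1 | x) for the linear score z = beta0 + sum(beta_j * x_j)."""
    z = beta0 + sum(b * xj for b, xj in zip(beta, x))
    return sigmoid(z)

# Hypothetical coefficients, chosen only for illustration.
beta0, beta = -1.0, [2.0, -0.5]

p = predict_proba([1.0, 2.0], beta0, beta)  # z = -1 + 2 - 1 = 0
label = int(p >= 0.5)                       # default 0.5 threshold
print(p, label)
```

A point with score exactly zero sits on the decision boundary, which is why its probability comes out as 0.5.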

Derivation of Cross-Entropy Loss (math-heavy, optional):

Step 1: Probability Model for Logistic Regression

In Logistic Regression, we predict the probability that $y = 1$ using the sigmoid function:

$$\hat{y} = P(y = 1 \mid x) = \sigma(z) = \frac{1}{1 + e^{-z}}$$

For binary classification ($y \in \{0, 1\}$), we can write the probability of either outcome in a single expression:

$$P(y \mid x) = \hat{y}^{\,y} \, (1 - \hat{y})^{\,1 - y}$$

Step 2: Likelihood Function (Using Product Notation)

Given a dataset with $n$ samples $(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)$, we assume all samples are independent.

Thus, the likelihood function (the probability of observing all the labels in the dataset under the model) is:

$$L(\beta) = \prod_{i=1}^{n} P(y_i \mid x_i)$$

Substituting the probability formula:

$$L(\beta) = \prod_{i=1}^{n} \hat{y}_i^{\,y_i} \, (1 - \hat{y}_i)^{\,1 - y_i}$$

Step 3: Log-Likelihood Function

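Since products are hard to optimize, we take the log (which converts products into sums):

$$\log L(\beta) = \sum_{i=1}^{n} \left[ y_i \log \hat{y}_i + (1 - y_i) \log (1 - \hat{y}_i) \right]$$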

Step 4: Defining Log Loss (Negative Log-Likelihood)

MLE aims to maximize this function. However, machine learning optimizers work by minimizing a loss function.

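Thus, we negate the log-likelihood (and, by convention, average over the $n$ samples) to define the Log Loss (Binary Cross-Entropy Loss):

$$J(\beta) = -\frac{1}{n} \sum_{i=1}^{n} \left[ y_i \log \hat{y}_i + (1 - y_i) \log (1 - \hat{y}_i) \right]$$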

This measures how well the predicted probabilities $\hat{y}_i$ align with the actual labels $y_i$.
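If the formula feels abstract, a small sketch that computes the mean log loss by hand can help. The function name, the clipping constant `eps`, and the toy labels and probabilities are assumptions made for illustration.

```python
import math

def log_loss(y_true, y_prob, eps=1e-15):
    """Mean binary cross-entropy; eps clips probabilities away from 0 and 1."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        total += y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return -total / len(y_true)

y_true = [1, 0, 1, 0]

# Probabilities that mostly agree with the labels give a small loss...
good = log_loss(y_true, [0.9, 0.1, 0.8, 0.2])
# ...while the same confidence pointed the wrong way gives a large one.
bad = log_loss(y_true, [0.1, 0.9, 0.2, 0.8])
print(round(good, 4), round(bad, 4))
```

The only thing that changes between the two calls is which side the model is confident about, and the loss reacts strongly to that.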

Why Cross-Entropy Loss Instead of MSE?

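With a sigmoid output, MSE is non-convex in the model parameters, so gradient descent can stall on flat regions where the sigmoid saturates.

Cross-Entropy Loss is convex in the parameters for logistic regression, so any minimum gradient descent reaches is a global one and convergence is more stable.

MSE (Mean Squared Error) caps the penalty for a single prediction at 1, but logistic regression outputs probabilities, so errors should be penalized based on confidence: cross-entropy grows without bound as the model becomes more confident in the wrong answer.

Cross-entropy loss (log loss) is derived from maximum likelihood estimation (MLE), so minimizing it is the same as finding the most likely parameters. Its gradient with respect to the score $z$ is simply $\hat{y} - y$, which stays large whenever the model is confidently wrong.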
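The difference is easiest to see on one confidently wrong prediction. The sketch below compares both losses and their gradients with respect to the score for a single example; the score of -6 is an arbitrary illustrative choice.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def losses_and_grads(z, y):
    """Return (ce_loss, ce_grad, mse_loss, mse_grad); gradients are w.r.t. the score z."""
    p = sigmoid(z)
    ce_loss = -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    ce_grad = p - y                            # d(cross-entropy)/dz
    mse_loss = (p - y) ** 2
    mse_grad = 2.0 * (p - y) * p * (1.0 - p)   # d(squared error)/dz, chain rule through sigmoid
    return ce_loss, ce_grad, mse_loss, mse_grad

# True label is 1, but the model is confidently wrong (very negative score).
ce_loss, ce_grad, mse_loss, mse_grad = losses_and_grads(z=-6.0, y=1)
print(f"cross-entropy: loss={ce_loss:.3f}, grad={ce_grad:.4f}")
print(f"squared error: loss={mse_loss:.3f}, grad={mse_grad:.4f}")
```

The squared-error gradient is nearly zero because of the extra `p * (1 - p)` factor, so the model gets almost no signal to correct its worst mistakes, while the cross-entropy gradient stays close to -1.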

Advantages of Logistic Regression

Simple & Interpretable – Provides clear insights into how features influence predictions.

Fast Training & Inference – Computationally efficient for small to medium datasets.

Probability Outputs – Unlike a standard SVM, which outputs a signed distance to the margin, it directly provides class probabilities.

Limitations of Logistic Regression

Assumes a linear relationship between features and log-odds.

Not robust to outliers – Decision boundaries can shift significantly.

Struggles with highly correlated features – Multicollinearity reduces interpretability.

2️⃣ Applied Perspective

Where is Logistic Regression Used?

Medical Diagnosis – Predicting whether a patient has a disease based on symptoms.

Spam Classification – Determining if an email is spam or not.

Credit Scoring – Assessing the probability of loan default.

Marketing Campaigns – Predicting whether a customer will purchase a product.

Handling Imbalanced Data

Interviewers love asking how you’d handle class imbalance (e.g., detecting fraud with 99% non-fraud cases).

✅ Class Weights: Assign higher weight to the minority class using class_weight='balanced' in sklearn.

✅ Oversampling & Undersampling: Use SMOTE (Synthetic Minority Over-sampling Technique) or downsample the majority class.

✅ Threshold Tuning: Adjust the decision threshold based on the precision-recall tradeoff instead of using the default 0.5.
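To see what class weighting does numerically, here is a small sketch of the "balanced" heuristic, which scikit-learn documents as `n_samples / (n_classes * np.bincount(y))`. The helper name and the 98/2 toy split are assumptions for illustration; the sketch only computes the weights and does not train a model.

```python
from collections import Counter

def balanced_class_weights(labels):
    """weight_c = n_samples / (n_classes * count_c), so rarer classes get larger weights."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * k) for c, k in counts.items()}

# Toy dataset: 98% negative, 2% positive.
labels = [0] * 98 + [1] * 2
weights = balanced_class_weights(labels)
print(weights)  # the minority class weight is 25.0, the majority weight is about 0.51
```

During training, each example's loss term is multiplied by its class weight, so a mistake on a positive example here counts roughly 49 times as much as a mistake on a negative one.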

📌 Example Interview Question:

"What techniques would you use if you have 98% negative samples in a binary classification problem?"

One-vs-Rest (OvR) vs. Softmax Regression

For multi-class classification, logistic regression extends in two ways:

1️⃣ One-vs-Rest (OvR): Train separate binary classifiers for each class vs. all others.

2️⃣ Softmax Regression: A single model predicts probability distribution across multiple classes using the softmax function.

When to use what?

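OvR can work well when you mainly care about separating one class from all the others (e.g., fraud vs. every other transaction type), since each binary classifier can be weighted and thresholded on its own.

Softmax is better when classes are balanced, mutually exclusive, and you want probabilities that sum to 1 across classes (e.g., classifying handwritten digits).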
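One practical difference is how the two approaches turn per-class scores into probabilities. The sketch below uses made-up scores for three classes; the function names are illustrative.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def softmax(scores):
    """Jointly normalized class probabilities; subtracting the max keeps exp() stable."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def ovr_probs(scores):
    """One independent sigmoid per class, as in One-vs-Rest."""
    return [sigmoid(s) for s in scores]

scores = [2.0, 1.0, 0.1]          # hypothetical per-class linear scores
soft = softmax(scores)
ovr = ovr_probs(scores)
print(soft, sum(soft))            # sums to 1
print(ovr, sum(ovr))              # does not have to sum to 1
```

Both pick the same top class here, but only the softmax output is a proper distribution over the classes.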

3️⃣ System Design Angle

Deploying Logistic Regression in Production

In real-world ML systems, logistic regression is widely used due to its simplicity, interpretability, and efficiency. But there are challenges:

Feature Engineering:

Handling categorical variables (one-hot encoding, target encoding).

Scaling numerical features (standardization).
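As a rough sketch of these two preprocessing steps, here is a pure-Python version of one-hot encoding and standardization. The helper names and toy values are assumptions; in a real pipeline you would typically compute the mean, standard deviation, and category list on the training data and reuse them at inference time.

```python
import statistics

def standardize(values):
    """Rescale to zero mean and unit variance (assumes the values are not all equal)."""
    mean = statistics.mean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]

def one_hot(values):
    """Return (encoded rows, category order) with one 0/1 column per category."""
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return rows, categories

ages = [20, 30, 40]
scaled = standardize(ages)
encoded, categories = one_hot(["red", "blue", "red"])
print(scaled)
print(categories, encoded)
```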

Model Updating:

Streaming data? Use online learning (SGD updates) instead of retraining from scratch.

Detect concept drift (when data distribution changes over time).
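For the online-learning point, a single SGD step on the log loss is short enough to write out. This is a minimal sketch with a hypothetical two-feature stream and an arbitrary learning rate, not a production update loop (no regularization, no learning-rate schedule, no drift detection).

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sgd_update(weights, bias, x, y, lr=0.1):
    """One online gradient step on the log loss for a single example (x, y)."""
    z = bias + sum(w * xj for w, xj in zip(weights, x))
    error = sigmoid(z) - y  # gradient of the log loss w.r.t. the score z
    new_weights = [w - lr * error * xj for w, xj in zip(weights, x)]
    new_bias = bias - lr * error
    return new_weights, new_bias

# Hypothetical stream: the first feature signals class 1, the second signals class 0.
stream = [([1.0, 0.0], 1), ([0.0, 1.0], 0)] * 200

weights, bias = [0.0, 0.0], 0.0
for x, y in stream:
    weights, bias = sgd_update(weights, bias, x, y)

p_pos = sigmoid(bias + weights[0])  # P(y = 1) for x = [1, 0]
p_neg = sigmoid(bias + weights[1])  # P(y = 1) for x = [0, 1]
print(weights, bias, p_pos, p_neg)
```

Each new example nudges the existing parameters, so the model keeps adapting without a full retrain.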

Interview Questions (Try First!)

Here are some important and tricky Logistic Regression questions that often come up in ML interviews. Try answering them before checking the solutions!

1️⃣ Why is Logistic Regression still considered a linear model even though it uses the sigmoid function?

2️⃣ What are other metrics used apart from accuracy in Logistic Regression?

3️⃣ How do you interpret the coefficients β0 and β1 in Logistic Regression?

4️⃣ What happens if the features in Logistic Regression are highly correlated?

5️⃣ How do you handle an imbalanced dataset in Logistic Regression?

6️⃣ What are the limitations of Logistic Regression, and when would you choose a different model?

7️⃣ What happens if you fit Logistic Regression on data that doesn’t follow the linear decision boundary assumption?

Solutions

Q1: Why is Logistic Regression still considered a linear model even though it uses the sigmoid function?

Answer: Logistic Regression is linear in the sense that the decision boundary is a linear function of the input features. The sigmoid function transforms the linear score into a probability, but the boundary where P(y=1)=0.5 is exactly where that score equals zero, which is a linear equation:

$$\beta_0 + \beta_1 x_1 + \dots + \beta_p x_p = 0$$

Unless we use polynomial features or feature transformations, Logistic Regression does not model non-linear relationships.

Q2: What are other metrics used apart from accuracy in Logistic Regression?

Answer: Accuracy is not always reliable, especially for imbalanced datasets. Other important metrics include:

Precision – Measures how many of the predicted positives are actually correct.

Recall – Measures how many actual positives were correctly predicted.

F1-score – Harmonic mean of precision and recall, useful for imbalanced data.

Log Loss (Cross-Entropy Loss) – Evaluates how well the predicted probabilities match actual labels.

AUC-ROC (Area Under the Curve - Receiver Operating Characteristic) – Measures model discrimination ability.

AUC-PR (Precision-Recall Curve) – More useful when dealing with imbalanced datasets.
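A quick sketch shows why accuracy alone can mislead. The helper names and the 95/5 toy labels are assumptions for illustration.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels in {0, 1}."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn

def precision_recall_f1(y_true, y_pred):
    tp, fp, fn, _ = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

y_true = [0] * 95 + [1] * 5

# A model that always predicts the majority class: high accuracy, useless recall.
always_zero = [0] * 100
accuracy = sum(t == p for t, p in zip(y_true, always_zero)) / len(y_true)
_, recall_zero, _ = precision_recall_f1(y_true, always_zero)

# A model that finds 3 of the 5 positives at the cost of 2 false alarms.
some_hits = [0] * 93 + [1] * 2 + [1] * 3 + [0] * 2
precision, recall, f1 = precision_recall_f1(y_true, some_hits)
print(accuracy, recall_zero, precision, recall, f1)
```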

Q3: How do you interpret the coefficients β0 and β1 in Logistic Regression?

Answer:

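β0 (intercept) represents the baseline log-odds of the positive class when all features are zero.

β1 (coefficient) is the change in the log-odds of the positive class for a one-unit increase in the feature, holding the other features fixed. Equivalently, the odds are multiplied by $e^{\beta_1}$.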
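A small numeric check makes the interpretation easier to remember. The coefficient values below are invented for illustration.

```python
import math

# Hypothetical fitted coefficients for a single-feature model.
beta0, beta1 = -2.0, 0.7

def odds(x):
    """Odds of the positive class, p / (1 - p), which equal exp(beta0 + beta1 * x)."""
    return math.exp(beta0 + beta1 * x)

# Probability of the positive class when the feature is zero (from the intercept alone).
baseline_prob = 1.0 / (1.0 + math.exp(-beta0))

# Increasing x by one unit multiplies the odds by exp(beta1), wherever you start.
odds_ratio = odds(3.0) / odds(2.0)
print(round(baseline_prob, 4), round(odds_ratio, 4), round(math.exp(beta1), 4))
```

With these numbers, each extra unit of the feature roughly doubles the odds of the positive class.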

Q4: What happens if the features in Logistic Regression are highly correlated?

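Answer: Multicollinearity makes coefficient estimates unstable: small changes in the training data can swing individual coefficients a lot (high variance), which makes them hard to interpret even when predictions barely change. This can be mitigated using: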

Feature selection

Dimensionality reduction (PCA)

Regularization (L1/Lasso or L2/Ridge penalties)

Q5: How do you handle an imbalanced dataset in Logistic Regression?

Answer:

Resampling techniques: Oversampling the minority class or undersampling the majority class.

Adjusting the decision threshold: Instead of 0.5, optimize based on Precision-Recall tradeoff.

Using class weights: Assign higher weights to the minority class during training.
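Threshold tuning in particular is easy to sketch. The example below picks the threshold with the best F1 from a handful of candidates; the labels, probabilities, and candidate list are invented, and in practice this search would be run on a held-out validation set rather than on the test data.

```python
def f1_at_threshold(y_true, y_prob, threshold):
    """F1 score when predicting 1 for every probability >= threshold."""
    y_pred = [1 if p >= threshold else 0 for p in y_prob]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def best_threshold(y_true, y_prob, candidates):
    """Return the candidate threshold with the highest F1."""
    return max(candidates, key=lambda t: f1_at_threshold(y_true, y_prob, t))

# Hypothetical validation labels and predicted probabilities (3 positives out of 10).
y_true = [0, 0, 0, 0, 0, 0, 1, 0, 1, 1]
y_prob = [0.05, 0.1, 0.1, 0.2, 0.2, 0.3, 0.35, 0.4, 0.45, 0.8]

candidates = [0.2, 0.3, 0.35, 0.4, 0.5]
chosen = best_threshold(y_true, y_prob, candidates)
print(chosen, f1_at_threshold(y_true, y_prob, chosen), f1_at_threshold(y_true, y_prob, 0.5))
```

On this toy data the default 0.5 misses two of the three positives, while a lower threshold recovers them at the cost of one false positive.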

Q6: What are the limitations of Logistic Regression, and when would you choose a different model?

Answer:

Assumes a linear decision boundary (struggles with non-linearity).

Sensitive to outliers.

Requires manual feature engineering.

If non-linearity is an issue, Decision Trees, SVMs, or Neural Networks are better alternatives.

Q7: What happens if you fit Logistic Regression on data that doesn’t follow the linear decision boundary assumption?

Answer:

Logistic Regression assumes a linear relationship in log-odds space. If this assumption fails, the model:

Underfits: no straight-line boundary separates the classes well, so errors stay high even on the training data (high bias).

Fails to capture interactions or curvature in data.

Solutions:

Add polynomial features (e.g., x^2).

Use kernelized methods (SVM with a radial basis function kernel).

Switch to non-linear models like decision trees or neural networks.
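The polynomial-feature fix can be sketched in a few lines. Suppose, hypothetically, that the positive class is every point with |x| > 1: no single cut on x separates the classes, but a model that is linear in the features [x, x^2] can. The coefficients below are hand-picked for illustration, not fitted.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(x, beta0, beta1, beta2):
    """Logistic model on the features [x, x^2]: linear in the features, quadratic in x."""
    return sigmoid(beta0 + beta1 * x + beta2 * x * x)

# Hand-picked coefficients that place the boundary at x^2 = 1.
beta0, beta1, beta2 = -4.0, 0.0, 4.0

probs = {x: predict(x, beta0, beta1, beta2) for x in (-2.0, -0.5, 0.5, 2.0)}
for x, p in probs.items():
    print(x, round(p, 3), int(p >= 0.5))
```

The model is still plain logistic regression; only the inputs changed.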

Bonus Tip: When NOT to Use Logistic Regression

If your dataset is highly imbalanced and class weights, resampling, or threshold tuning are not enough, consider tree-based models or deep learning.

If relationships between features are non-linear, logistic regression might underperform.

If interpretability isn’t a priority, neural networks might be better suited.

What’s Next?

In our next post, we’ll take the discussion further by exploring Decision Trees—a powerful alternative to Logistic Regression for classification problems. We’ll break down how trees split data, handle non-linearity, and compare their strengths and weaknesses against Logistic Regression. Expect practical insights and tricky interview questions. Stay tuned!

Thank you for reading! Have you faced any challenges with Logistic Regression? Or is there a specific topic you’d like me to cover? Let’s discuss in the comments.

Happy learning!
